SwaggerHub is one of SmartBear’s flagship products. It supports API design, documentation, management, and testing, and is offered both as a SaaS subscription platform and as an on-premises / cloud solution. It also provides interactive API documentation, endpoint design, collaborative features, and API functionality validation. With its support for the OpenAPI specification, SwaggerHub significantly simplifies API development and documentation for software teams.

Consumers often struggle with version tracking, providing feedback, and getting help, while API discoverability remains a major challenge for both providers and users. Although SwaggerHub offers some space for API documentation, it is too limited (typically just a single sentence) and falls short of conveying full functionality. Competitors are already addressing this gap in SmartBear's portfolio, so closing it is crucial.

Providers:

Providers create and maintain the API. They need comprehensive documentation, clear examples, version management, interactive elements, and security information. They also want to monetise their APIs.

Consumers:

Consumers use the API for development. They require clear usage instructions, quick onboarding, error handling, code samples, interactive testing, and updates/versioning details.

Design Thinking is a dynamic, iterative approach that empowers us to challenge assumptions, ask questions, and foster innovative thinking. The goal is to make products, services, and processes better by actively involving users in the entire process. We used this approach throughout the project.

Design sprints are used to rapidly solve problems, test ideas, and develop solutions in a short, focused timeframe, usually five days. This process encourages collaboration, reduces risks, and accelerates innovation by quickly validating concepts with real user feedback before committing to full development. It helps teams stay aligned, make faster decisions, and iterate efficiently. We opted for Design Sprint 2.0, an updated version that condenses the original five-day process into four days.

To complete an API documentation portal, we had two platforms to build: the provider side and the consumer side. We weren’t just building our own API documentation portal but rather a solution that our customers could use and adapt to reflect their branding, fonts and colours. It had to be intuitive. There was much discussion, and there were conflicting views, about which side we should work on first: provider or consumer? Ultimately, we decided to start with the consumer side because it was much less complicated than the provider platform.

In the days before the sprint, the UX team met with the developers and product managers on Zoom to introduce them to Miro - the tool we used for the four days. We took them individually through the sprint design board, explaining the plan for each of the four days. Our introductory task was a fun one - add an image of your own or find one on Google of your favourite place to be on vacation. Add a sticky note beside your image and write about what is special about your chosen location. Each team member was encouraged to add their profile image and job description to the team section.

We started with the expert interviews. Since we have internal users of the solution that we were going to design, we decided to invite experts from our own company who understand the problem. These experts included product architects, VP and directors of UX, product and engineering and of course, our own developers (and sprint team members). While the experts talked, the team recorded the “How Might We…” (HMW) questions. The goal of this activity is to jot down all the insights and issues that experts share.

After we had organised the HMW stickies into categories and voted, we were left with four important HMW questions among them:

Day 3 began with the gallery of sketches and heat map voting. The facilitator presented each concept to the team to keep it anonymous. Each team member had 60 red dots, which we were to place next to the ideas or features we liked the most.

Consumer Platform

My design and the eventual winning design tied for the most votes, which meant that the ‘decider’ had to choose between them. Interestingly, the winning design was created by one of the developers, which gave the developers a great boost. We were also able to laugh about the developers beating the designers, and we got slagged a lot about them having to come in and do our job for us!

After completing a design sprint, the design team (myself and an associate designer), following paper prototyping, started rapid prototyping to bring our ideas to life quickly and test them early with our users. This approach allowed us to test and validate our concepts tangibly, catching any issues early on and refining the design before committing to full development.

There was a lot of pressure on us, which took us into the weekend, but we got it done and ready to test by Monday morning. The senior UX manager who facilitated the design sprint helped but had to return to work in his own area, so it was just the two of us designing.

We had 13 participants in our usability tests. Five of the participants were external customers, and the remaining eight were internal customers. Most usability tests were conducted via Zoom because of our global locations. However, we were able to conduct some of them in person because I self-funded a trip to Poland. This was something I had wanted to do since I started working at SmartBear. I was the only designer in Ireland, with the majority of the UX team located in Poland. I think it is really important to meet in person if at all possible because it strengthens team bonds. I really enjoyed my trip and meeting my colleagues following months of working together using Zoom.

For the most part, there were two of us conducting the usability tests. One took notes, while the other interviewed. We took turns in both tasks. We also had our product manager attend every meeting to introduce us to our customers.

I was tasked with gathering all the invaluable insights from our usability tests, which we included in subsequent iterations. Some of these included:

Here, I will share some aspects of my work. Hopefully, you will get a good feel for my work. Of course, I am always happy to chat and answer any questions you have about how I approach tasks.

One of the things that our customers asked for in the usability tests was private and public products, along with explanations of each, so here is a tooltip that appears when the user hovers over the lock symbol.

I worked on Search on both the consumer and provider side. In the earlier designs, I explored various design options. I did a lot of research and came across a lot of patterns I particularly liked. Our developers love how GitHub’s search works, so I took this on board, too, especially as developers will use our portal.

More Filtering Options

With big pressure on our developers ahead of the first release of our new product, I designed a simpler search version. However, we tested the versions above with our design partner customers, and they loved the simplicity of the proposed design.

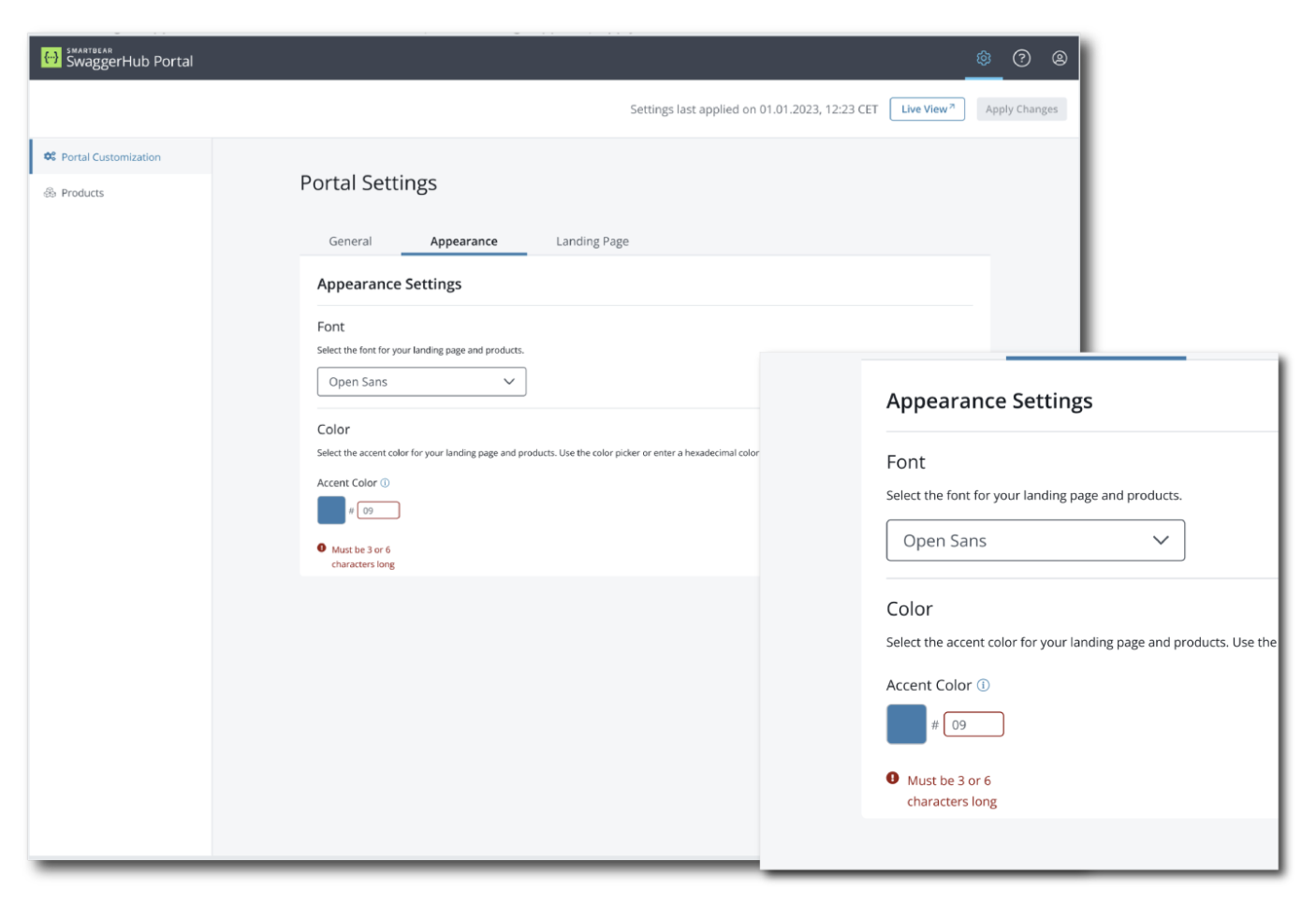

Just one of the many things I worked on was the colour picker. Our first prototype didn’t have a colour picker but allowed users to change the colours by entering a hex code. This was far from satisfactory for our customers who tested our first prototype.

The version below was very basic, and the user needed to know their hex code to be able to change the colours.

The hex field becomes active on click and can be edited directly in the field.

If the user leaves the hex code field blank, it reverts to the previous code, and the colour box remains the same colour (i.e., it only changes when a new hex code is entered).
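The blank-field behaviour described above can be sketched roughly as follows. This is a minimal illustration rather than the code our developers shipped: the function name `resolveHexInput`, the accepted formats (3 or 6 hex digits, with an optional leading `#`), and the choice to treat an invalid entry the same way as a blank one are all assumptions made for the example.

```typescript
// Hypothetical helper, not taken from the product codebase.
// Accepts "abc", "#abc", "aabbcc" or "#aabbcc" (assumed formats).
const HEX_PATTERN = /^#?([0-9a-fA-F]{3}|[0-9a-fA-F]{6})$/;

function resolveHexInput(input: string, previous: string): string {
  const trimmed = input.trim();

  // Blank field: revert to the previous code so the colour box does not change.
  if (trimmed === "") {
    return previous;
  }

  const match = HEX_PATTERN.exec(trimmed);

  // Assumption: an invalid entry also falls back to the previous code.
  if (match === null) {
    return previous;
  }

  let digits = match[1].toLowerCase();

  // Expand shorthand such as "abc" to "aabbcc".
  if (digits.length === 3) {
    digits = digits
      .split("")
      .map((c) => c + c)
      .join("");
  }

  return "#" + digits;
}
```

For example, `resolveHexInput("", "#336699")` returns `"#336699"`, while `resolveHexInput("#ABC", "#336699")` returns `"#aabbcc"`, so the colour box only updates when a new, well-formed hex code is entered.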

On hover, a tooltip appears, which was another ask of the customers who tested our first prototype.

In the new design, I created a colour picker that opens to the right of the colour box (only) when the user clicks on edit in the colour box.

There were a few iterations on this after testing with our customer design partners, such as adding an ‘apply’ button. Initially, the new colour was applied on closing the modal. However, the users didn’t know if their colour had been accepted, so they wanted the button.

Finally, when the user made the changes, they could view them (‘live view’) before clicking ‘apply changes’. One of the requests by many of our users was the ability to see their changes before committing to them.
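One way to think about the preview-then-apply flow is as two copies of the theme: a draft that the live view reads from and an applied copy that only changes when the user confirms. The sketch below is purely illustrative; the `Theme` shape, the `ThemeEditor` class, and its method names are hypothetical and are not the product's actual implementation.

```typescript
// Hypothetical model of the preview/apply flow; all names are assumptions.
interface Theme {
  primaryColour: string;
}

class ThemeEditor {
  private applied: Theme;
  private draft: Theme;

  constructor(initial: Theme) {
    this.applied = { ...initial };
    this.draft = { ...initial };
  }

  // Called when the user picks a colour; only the draft changes.
  pickColour(colour: string): void {
    this.draft = { ...this.draft, primaryColour: colour };
  }

  // What the live view renders: the uncommitted draft.
  preview(): Theme {
    return { ...this.draft };
  }

  // What consumers currently see: the last applied theme.
  current(): Theme {
    return { ...this.applied };
  }

  // Lets the UI show that changes have not been applied yet.
  hasPendingChanges(): boolean {
    return this.draft.primaryColour !== this.applied.primaryColour;
  }

  // The 'apply changes' button: commit the draft.
  applyChanges(): void {
    this.applied = { ...this.draft };
  }

  // Closing without applying: throw the draft away.
  discardChanges(): void {
    this.draft = { ...this.applied };
  }
}
```

Keeping the draft separate means the user gets explicit confirmation from the button, which was the feedback that prompted it, while the live view can still update on every change.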

The screens below demonstrate the ‘live preview’ as the user builds their product. You will have seen the resulting consumer side, and I thought it would be interesting for you to see how this is created by the provider. Our customer partners, during testing, pointed out that there was a lot of ‘white space’ in our first prototype. They suggested that we could put a live preview there so that they could get instant feedback.

A close-up of the ‘Cat World’ product when it is complete. Users can go to ‘live view’ to see how it will look on the consumer side before applying their changes.

The product can be made private, with an option to show it on the landing page. Some of our design partners don’t want their private products visible at all, but some do.

A close-up of the ‘Cat World’ product when it is made a private product.